For my final project, I constructed an interactive wall that showcases the multiplicity of narratives surrounding women, their pain, and their conflicted relationships with emotion.

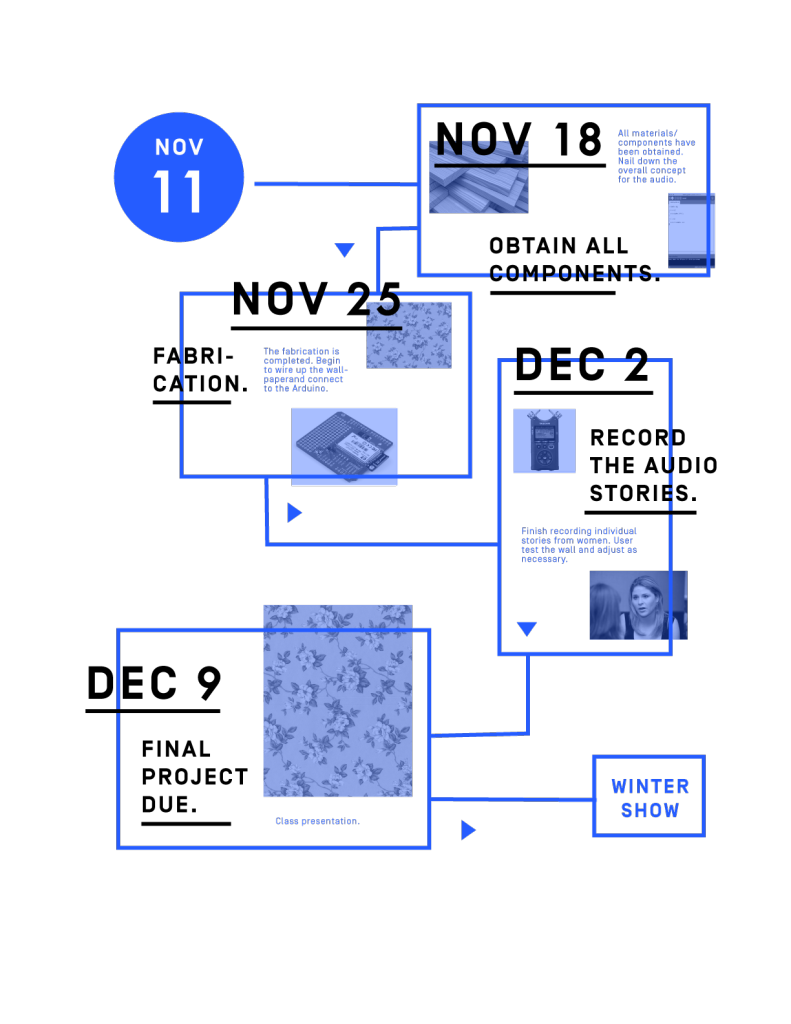

I asked several women in my life to record themselves talking about their relationships with sadness and grief. Touching different points on the wall triggers audio clips from those interviews. You can see the progress of this project here and here.

Check out the video:

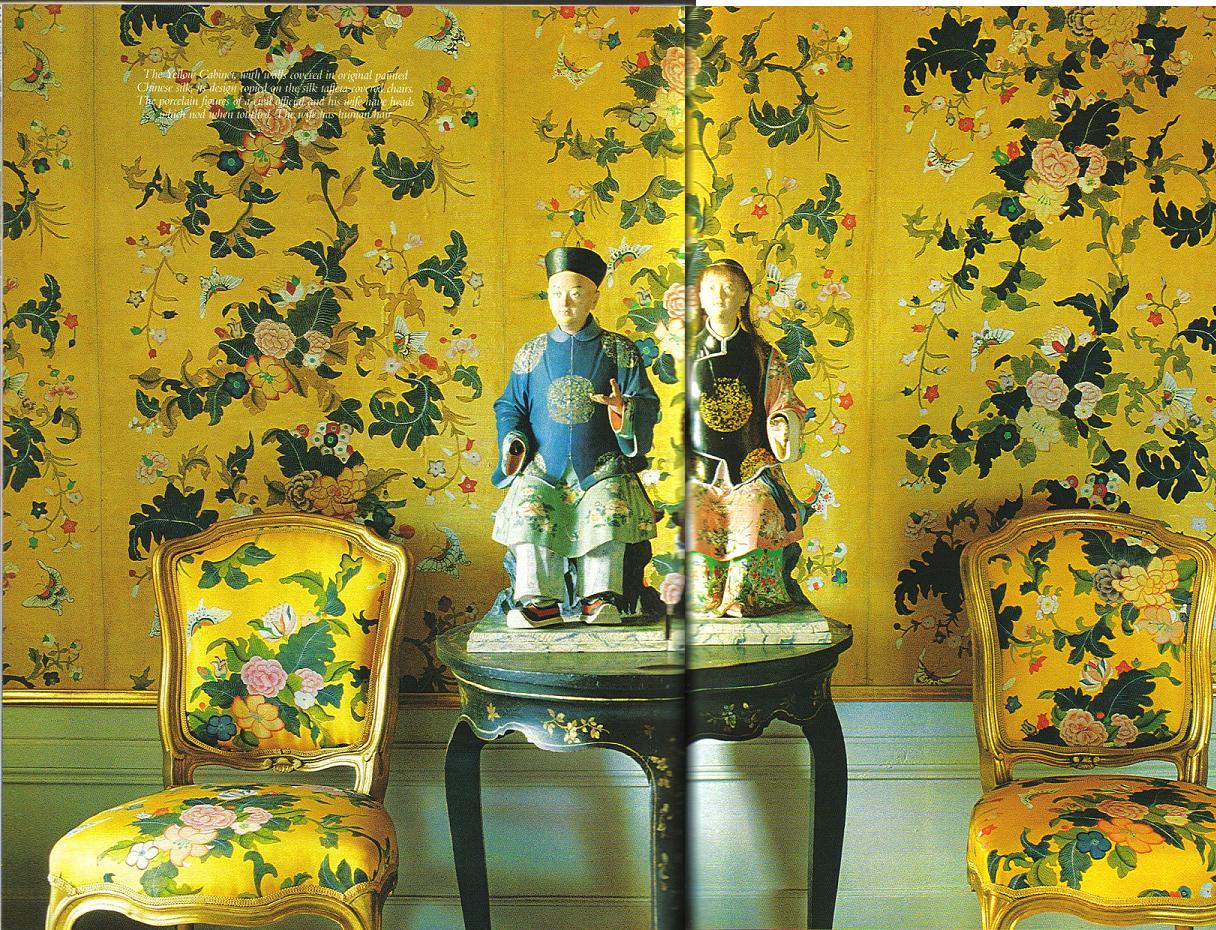

I was initially inspired by Charlotte Perkins Gilman's short story "The Yellow Wallpaper." Published in 1892, the story is written as the diary of a woman who, unable to find joy in marriage and motherhood, is sent to the country and confined alone to a room in an effort to "cure" her ineptitude. She wants to write, but her husband and her doctor forbid it. As she spends her days confined to the bedroom, the patterns on the faded yellow wallpaper come to life for her and eventually precipitate her descent into insanity.

Here's a sampling of what the women said:

“I think there’s a stereotype in our culture that emotions are bad and they are weak.”

“I expect myself to push forward and to be better and to move on.”

“I’ve come up with this theory that it’s best to feel whatever emotion feels most urgent at that time and if it’s sadness then so be it. I think that sitting with sadness and getting to know its roots — it’s huge.”

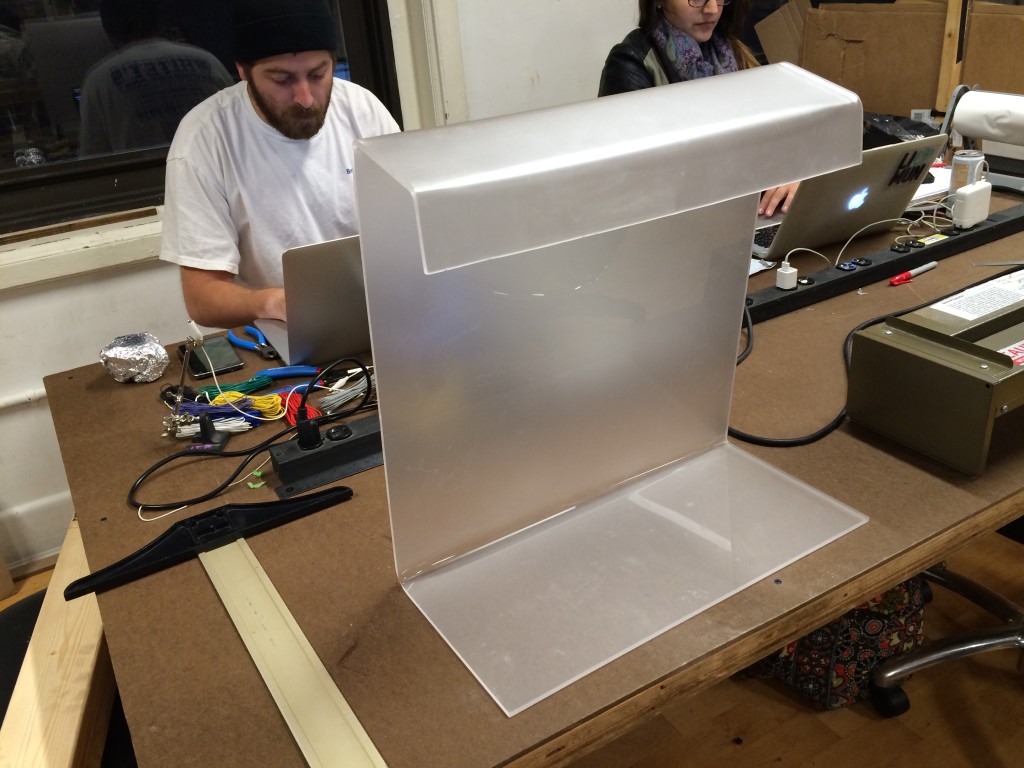

To fabricate the wall, I covered a canvas with vintage floral wallpaper from the 1920s. I chose wallpaper because we associate it with domesticity, a space and a quality that have historically been linked to women.

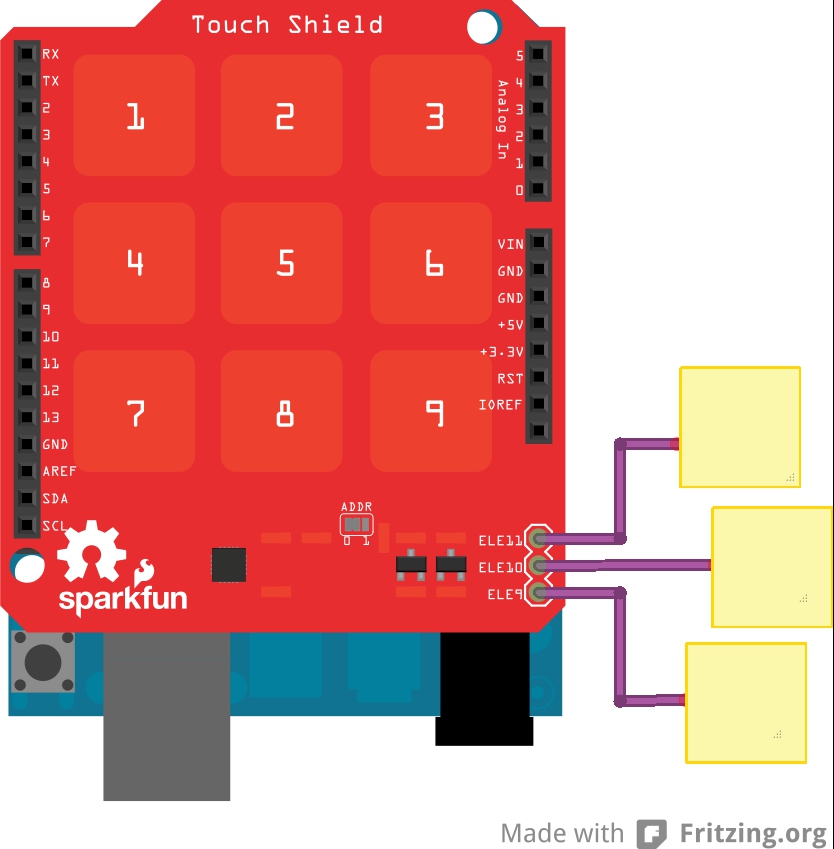

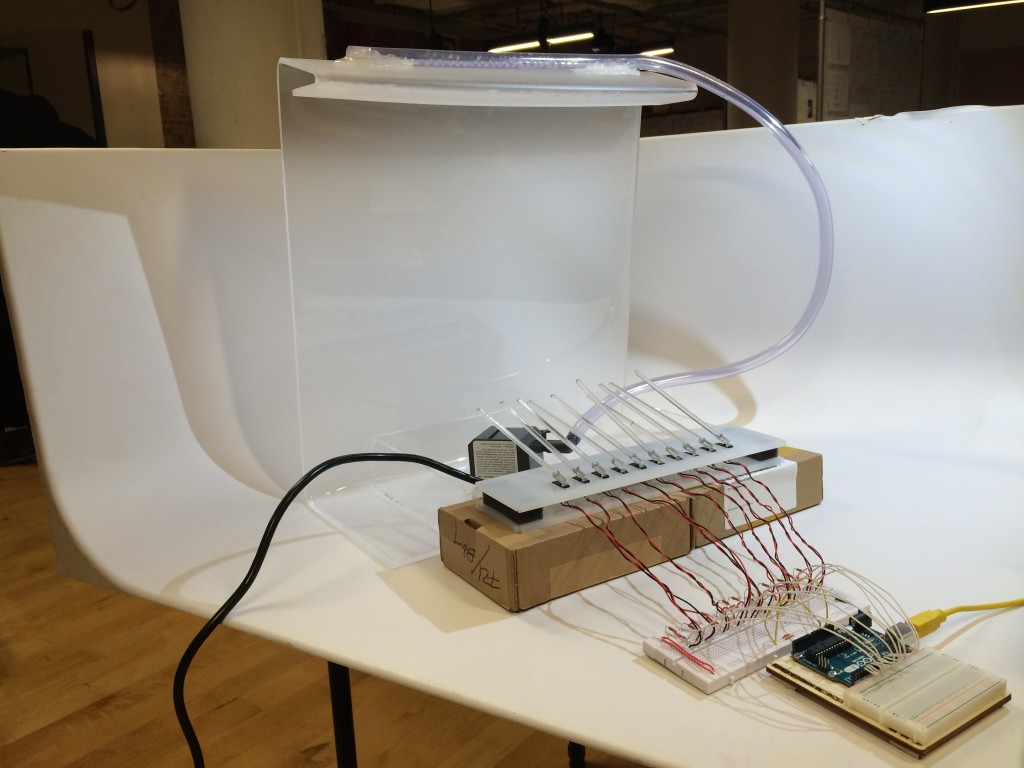

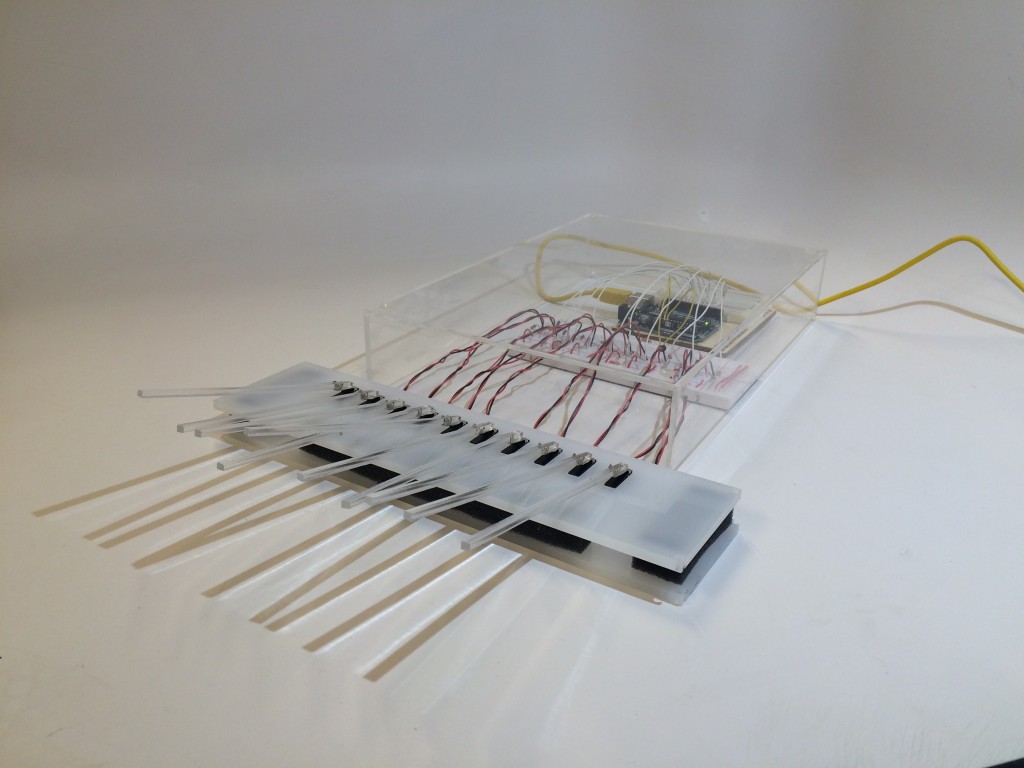

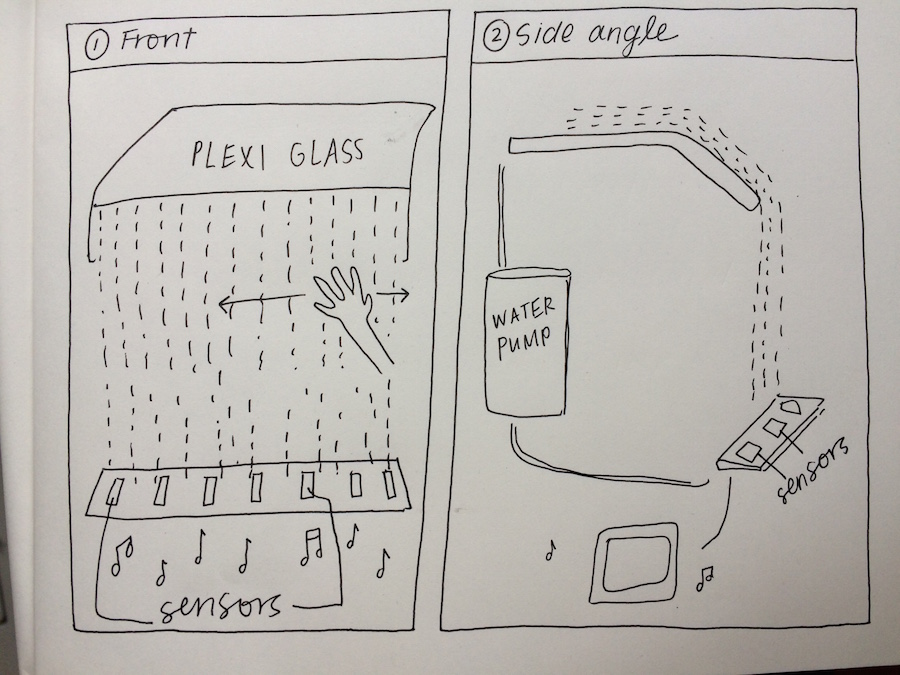

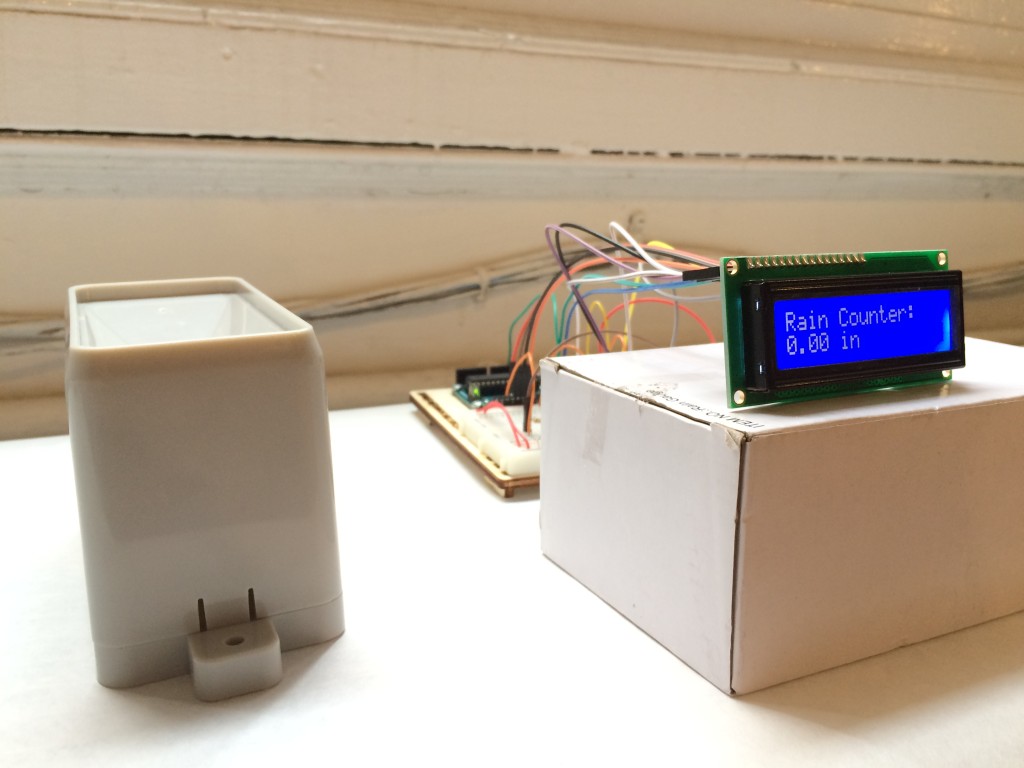

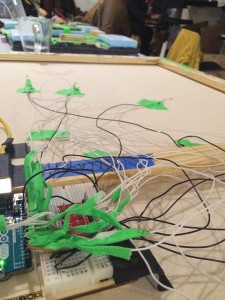

As I mentioned last week, behind the canvas is a web of wires connected to the SparkFun Capacitive Touch Sensor Breakout, which communicates with the Arduino over I2C. The Arduino code was drawn largely from example code for the touch sensor that I found online at bildr. Using what we learned about serial communication, I connected the Arduino to a p5.js sketch that plays the corresponding interview clip when a flower is touched.
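To make the sensor-to-sketch flow concrete, here is a minimal sketch of the logic, written as plain C++ so it can run off the board. It assumes the breakout is the MPR121-based board, whose touch status arrives as two bytes: the first covers electrodes 0 to 7 and the low four bits of the second cover electrodes 8 to 11. The function names and the one-index-per-line serial message are hypothetical, not taken from the bildr example or from my actual code.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// Assumption: MPR121-style sensor with 12 electrodes (one per flower).
constexpr int kNumElectrodes = 12;

// Combine the two touch-status bytes into a 12-bit mask.
// lsb holds electrodes 0-7; the low nibble of msb holds electrodes 8-11.
uint16_t touchMask(uint8_t lsb, uint8_t msb) {
  return static_cast<uint16_t>(((msb & 0x0F) << 8) | lsb);
}

// Return the electrodes that are touched now but were not touched in the
// previous reading, so each touch triggers its audio clip only once.
std::vector<int> newTouches(uint16_t prev, uint16_t curr) {
  std::vector<int> result;
  uint16_t rising = static_cast<uint16_t>(curr & ~prev);
  for (int i = 0; i < kNumElectrodes; ++i) {
    if (rising & (1u << i)) result.push_back(i);
  }
  return result;
}

// Hypothetical serial protocol: send one electrode index per line, which
// the p5.js sketch could read and map to the matching interview clip.
std::string serialLine(int electrode) {
  return std::to_string(electrode) + "\n";
}
```

Sending only newly touched electrodes (rather than the full mask on every loop) keeps the serial traffic small and stops a held touch from restarting the same clip over and over.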

After playtesting the wall last week, I decided to add LEDs behind each flower that turn off while you are touching them. I also added a pair of headphones to create a sense of intimacy between the listener and the speaker.
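The LED behavior reduces to inverting the touch mask: each LED is lit unless its flower is touched. The sketch below assumes one LED per electrode and twelve flowers; both the count and the function name are hypothetical, and on the Arduino each resulting state would be written to that LED's pin.

```cpp
#include <array>
#include <cstdint>

// Assumption: one LED behind each of 12 flowers, indexed like the electrodes.
constexpr int kNumFlowers = 12;

// LED i is on unless bit i of the touch mask is set (flower i is touched).
std::array<bool, kNumFlowers> ledStates(uint16_t touched) {
  std::array<bool, kNumFlowers> leds{};
  for (int i = 0; i < kNumFlowers; ++i) {
    leds[i] = (touched & (1u << i)) == 0;
  }
  return leds;
}
```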

Overall, I was really happy with the quality of the audio I got and the simplicity of the interaction. My friends and family who recorded themselves were generous and thoughtful, and I think that comes through when you listen.

If I were to do this project again, I would change the fabrication of the wall to make it more elegant and beautiful. Right now the wall has a DIY feel, which I like, but if this were to become a real installation piece it would require some rethinking.